What is Time Complexity in Data Structure

When working with data structures and algorithms, one of the most critical aspects to consider is time complexity. It helps developers evaluate and compare the efficiency of different algorithms. Whether you're preparing for coding interviews or optimizing software performance, understanding time complexity is essential.

In this article, we'll dive deep into what time complexity is, why it matters, and how to analyze it effectively with examples.

Understanding Time Complexity

Definition

Time complexity describes how the running time of an algorithm grows as a function of its input size (n). Rather than measuring seconds, it counts the basic operations an algorithm performs. This lets us compare algorithms without worrying about hardware or language-specific optimizations.

Why is Time Complexity Important?

Notations in Time Complexity (Big O Notation)

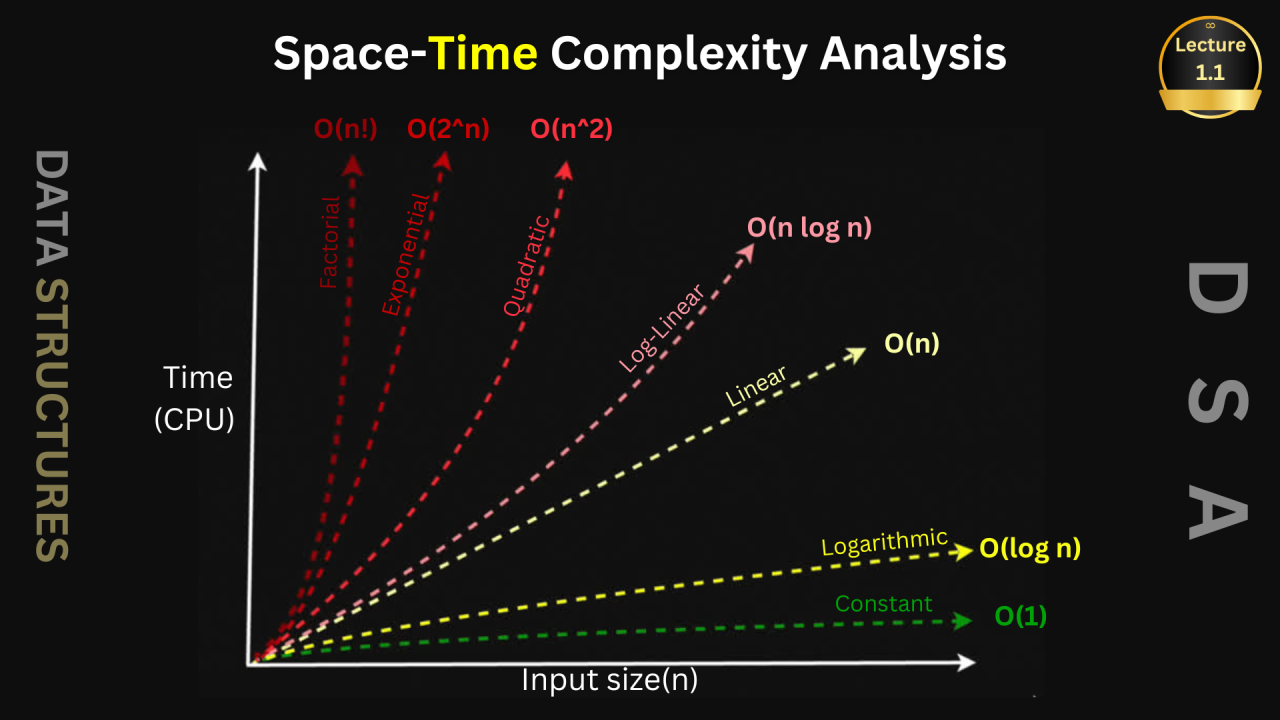

To express time complexity, we use Big O notation, which provides an upper bound on the growth rate of an algorithm’s runtime.
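For readers who want the precise meaning of "upper bound", this is the standard textbook definition, followed by a small worked example. The example shows why constant factors and lower-order terms are dropped.

```latex
f(n) = O(g(n)) \iff \exists\, c > 0,\ \exists\, n_0 \ge 1 \ \text{such that}\ 0 \le f(n) \le c \cdot g(n) \ \text{for all}\ n \ge n_0

\text{Example: } f(n) = 3n^2 + 5n + 2 \le 3n^2 + 5n^2 + 2n^2 = 10n^2 \quad \text{for } n \ge 1
\;\Rightarrow\; f(n) = O(n^2) \ \text{with}\ c = 10,\ n_0 = 1
```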

Common Big O Notations:
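As a rough illustration of the most common growth rates, the sketch below collects one small method per class. The class and method names are hypothetical and were chosen only for this example. Each comment states the growth rate of the method beneath it.

```java
// Hypothetical examples of common growth rates (names are illustrative only).
class GrowthExamples {

    // O(1): a single array access, no matter how long the array is.
    // Assumes a non-empty array.
    static int first(int[] arr) {
        return arr[0];
    }

    // O(log n): binary search halves the remaining range on every step.
    // Assumes the input array is sorted in ascending order.
    static int binarySearch(int[] sorted, int target) {
        int lo = 0, hi = sorted.length - 1;
        while (lo <= hi) {
            int mid = lo + (hi - lo) / 2; // avoids int overflow of (lo + hi)
            if (sorted[mid] == target) return mid;
            if (sorted[mid] < target) lo = mid + 1;
            else hi = mid - 1;
        }
        return -1; // target not present
    }

    // O(n): visits each element exactly once.
    static long sum(int[] arr) {
        long total = 0;
        for (int x : arr) total += x;
        return total;
    }

    // O(n^2): examines every pair (i, j) with i < j.
    static int countEqualPairs(int[] arr) {
        int pairs = 0;
        for (int i = 0; i < arr.length; i++) {
            for (int j = i + 1; j < arr.length; j++) {
                if (arr[i] == arr[j]) pairs++;
            }
        }
        return pairs;
    }

    // Exponential: naive recursive Fibonacci makes roughly 1.6^n calls,
    // which is bounded above by O(2^n).
    static long fib(int n) {
        if (n < 2) return n;
        return fib(n - 1) + fib(n - 2);
    }
}
```

For example, `binarySearch(new int[]{1, 3, 5, 7, 9}, 7)` returns index 3 after checking only two positions.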

How to Calculate Time Complexity

To determine an algorithm’s time complexity, follow these steps:
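A common approach works in four moves. First, count the basic operations as a function of n. Then add the costs of parts that run one after another, and multiply the costs of parts that are nested. Finally, keep only the fastest-growing term. The hypothetical method below makes that counting explicit for a method built from two single loops followed by a nested loop.

```java
// Hypothetical helper: counts the basic operations of a method made of
// two sequential loops over n followed by a nested loop over n.
class OperationCounter {

    static long countOperations(int n) {
        long ops = 0;
        // Part 1: a single loop, n operations.
        for (int i = 0; i < n; i++) ops++;
        // Part 2: another single loop, n more operations (sequential parts add).
        for (int i = 0; i < n; i++) ops++;
        // Part 3: a nested loop, n * n operations (nested parts multiply).
        for (int i = 0; i < n; i++) {
            for (int j = 0; j < n; j++) ops++;
        }
        // Total is n^2 + 2n; dropping the lower-order 2n term leaves O(n^2).
        return ops;
    }
}
```

For n = 10 this returns 120 (100 from the nested loop plus 20 from the two single loops). As n grows, the n² term dominates, which is why the result is written as O(n²).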

Example 1: Finding the Minimum Element in an Array

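```java
public static int findMin(int[] arr) {
    // Guard: arr[0] below would throw on an empty array.
    if (arr == null || arr.length == 0) {
        throw new IllegalArgumentException("arr must contain at least one element");
    }
    int min = arr[0];                      // start with the first element
    for (int i = 1; i < arr.length; i++) { // scan the remaining n - 1 elements
        if (arr[i] < min) {
            min = arr[i];                  // found a smaller value
        }
    }
    return min;
}
```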

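Complexity Analysis: The loop visits each of the remaining n - 1 elements exactly once and does a constant amount of work per element, so the time complexity is O(n). Only the variables min and i are stored, so the extra space is O(1).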
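The linear cost can be checked concretely with the hypothetical variant below. It runs the same loop as findMin but returns how many times the comparison `arr[i] < min` is evaluated instead of returning the minimum.

```java
// Hypothetical instrumented variant of findMin that reports its comparison count.
class FindMinCost {

    static int countComparisons(int[] arr) {
        if (arr.length == 0) {
            throw new IllegalArgumentException("array must not be empty");
        }
        int min = arr[0];
        int comparisons = 0;
        for (int i = 1; i < arr.length; i++) {
            comparisons++;          // one comparison per remaining element
            if (arr[i] < min) {
                min = arr[i];
            }
        }
        return comparisons;         // always arr.length - 1, whatever the values are
    }
}
```

Because the count is always n - 1, regardless of where the minimum sits, the running time grows linearly in every case.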

Example 2: Checking for Duplicates in an Array (Nested Loop)

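```java
public static boolean hasDuplicate(int[] arr) {
    // Compare every pair (i, j) with i < j exactly once.
    for (int i = 0; i < arr.length; i++) {
        for (int j = i + 1; j < arr.length; j++) {
            if (arr[i] == arr[j]) {
                return true; // stop at the first duplicate found
            }
        }
    }
    return false;
}
```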

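Complexity Analysis: In the worst case (no duplicates), the outer loop runs n times and the inner loop runs n - 1, n - 2, ..., 1, 0 times. That totals n(n - 1)/2 comparisons, so the time complexity is O(n²). No extra data structures are allocated, so the extra space is O(1).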
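The quadratic worst case can be confirmed by counting. The hypothetical variant below uses the same nested loop as hasDuplicate, including the early exit, but returns the number of comparisons it performed.

```java
// Hypothetical instrumented variant of hasDuplicate that returns its comparison count.
class DuplicateCheckCost {

    static long countComparisons(int[] arr) {
        long comparisons = 0;
        for (int i = 0; i < arr.length; i++) {
            for (int j = i + 1; j < arr.length; j++) {
                comparisons++;
                if (arr[i] == arr[j]) {
                    return comparisons; // early exit, same as the original method
                }
            }
        }
        return comparisons;             // n(n - 1)/2 when there are no duplicates
    }
}
```

With five distinct values the method performs 5 × 4 / 2 = 10 comparisons. If the first two elements are equal, it stops after a single comparison, which is the best case.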

Best Practices for Optimizing Time Complexity
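One widely used optimization is to replace a nested-loop search with a hash-based lookup. This is a minimal sketch that assumes the standard `java.util.HashSet`; the class name is hypothetical. It rewrites the duplicate check from Example 2 so that each element is inspected once.

```java
import java.util.HashSet;
import java.util.Set;

// Hypothetical faster duplicate check: expected O(n) time, O(n) extra space.
class DuplicateCheckFast {

    static boolean hasDuplicate(int[] arr) {
        Set<Integer> seen = new HashSet<>();
        for (int x : arr) {
            // Set.add returns false when x is already in the set.
            if (!seen.add(x)) {
                return true;
            }
        }
        return false;
    }
}
```

This trades memory for speed. Hash set insertions and lookups take expected constant time, so the check drops from O(n²) to expected O(n), while the set can grow to hold all n values. Sorting a copy of the array and comparing neighbours is another option, at O(n log n) time.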


What is Space Complexity in Data Structure?

When we analyze an algorithm's efficiency, we usually focus on time complexity, measuring how fast it runs. However, an equally important factor is space complexity, which determines how much memory an algorithm consumes. Understanding space complexity is crucial for optimizing applications, reducing memory overhead, and ensuring efficient use of system resources.

In this article, we'll break down the concept of space complexity, why it matters, how it is calculated, and its impact on real-world applications.

What is Space Complexity?

Space complexity refers to the total memory an algorithm requires to run, including both input-dependent memory and fixed memory usage. Like time complexity, it is usually expressed in Big O notation, which describes how memory consumption grows with the input size.

Components of Space Complexity
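A common way to reason about these components is to separate the memory occupied by the input from the auxiliary (extra) space the algorithm allocates while it runs. The two hypothetical methods below take the same input array but differ in auxiliary space.

```java
// Hypothetical examples contrasting constant and linear auxiliary space.
class AuxiliarySpace {

    // O(1) auxiliary space: only a running total and the loop variable,
    // however long arr is.
    static long sum(int[] arr) {
        long total = 0;
        for (int x : arr) total += x;
        return total;
    }

    // O(n) auxiliary space: allocates a new array as long as the input.
    static int[] reversedCopy(int[] arr) {
        int[] out = new int[arr.length];
        for (int i = 0; i < arr.length; i++) {
            out[i] = arr[arr.length - 1 - i];
        }
        return out;
    }
}
```

Both methods receive the same O(n) input. Only reversedCopy adds another O(n) block of memory on top of it.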

How to Calculate Space Complexity?

To compute space complexity, consider:

Example 1: Space Complexity of an Array

Consider an array of size n:

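```java
int[] arr = new int[n]; // allocates storage for n integers
```

The array holds n elements, so it requires O(n) space.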

Example 2: Space Complexity of Recursion

A recursive function:

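```java
void recursiveFunction(int n) {
    if (n == 0) return;       // base case
    recursiveFunction(n - 1); // each call adds a new frame to the call stack
}
```

At the deepest point, the calls for n, n - 1, ..., 0 are all waiting on the call stack. As a result, this function uses O(n) stack space even though it never allocates an array.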
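The growth of the call stack can be made visible with the hypothetical method below. It has the same shape as recursiveFunction but returns the number of stack frames active at the deepest point of the recursion.

```java
// Hypothetical variant of recursiveFunction that reports its peak recursion depth.
class RecursionDepth {

    static int maxDepth(int n) {
        if (n == 0) return 1;       // the base-case call is itself one frame
        return 1 + maxDepth(n - 1); // this frame plus every frame beneath it
    }
}
```

For n = 5 it returns 6 (frames for n = 5 down to n = 0), so the peak stack usage grows linearly with n. For very large n, a deep recursion like this can exhaust the thread's stack and throw a StackOverflowError.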

Example 3: Space Complexity of Iteration

Consider an iterative approach:

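```java
void iterativeFunction(int n) {
    for (int i = 0; i < n; i++) {
        System.out.println(i); // only the loop variable i is kept in memory
    }
}
```

The loop reuses a single variable regardless of n, so the auxiliary space is O(1).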

Common Space Complexities in Data Structures

Optimizing Space Complexity

1. In-Place Algorithm (O(1) Space)

2. Tail Recursion Optimization

3. Using Bit Manipulation

4. Efficient Data Structures
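Three of the techniques listed above can be sketched in Java as follows. This is an illustrative sketch with hypothetical names. reverseInPlace swaps elements inside the input array instead of allocating a copy. sumTailRecursive and sumIterative show the same tail-recursive computation before and after converting it to a loop. Standard Java runtimes do not perform tail-call elimination, so in Java the manual loop rewrite is what actually saves stack space. Finally, markMultiples uses `java.util.BitSet`, which stores one bit per flag.

```java
import java.util.BitSet;

// Hypothetical sketches of space-saving techniques.
class SpaceOptimizations {

    // In-place algorithm: reverses arr by swapping ends, O(1) auxiliary space.
    static void reverseInPlace(int[] arr) {
        int left = 0, right = arr.length - 1;
        while (left < right) {
            int tmp = arr[left];
            arr[left] = arr[right];
            arr[right] = tmp;
            left++;
            right--;
        }
    }

    // Tail-recursive sum of 1..n: the recursive call is the last action.
    // The JVM does not eliminate tail calls, so this still uses O(n) stack.
    static long sumTailRecursive(int n, long acc) {
        if (n == 0) return acc;
        return sumTailRecursive(n - 1, acc + n);
    }

    // The same computation as a loop, which is what tail-call elimination
    // would produce automatically in languages that support it. O(1) space.
    static long sumIterative(int n) {
        long acc = 0;
        while (n > 0) {
            acc += n;
            n--;
        }
        return acc;
    }

    // Bit manipulation: marks every k-th index below n using one bit per flag,
    // instead of the (typically) one byte per element of a boolean[].
    static BitSet markMultiples(int n, int k) {
        if (k <= 0) throw new IllegalArgumentException("k must be positive");
        BitSet flags = new BitSet(n);
        for (int i = 0; i < n; i += k) {
            flags.set(i);
        }
        return flags;
    }
}
```

For example, `sumIterative(100)` and `sumTailRecursive(100, 0)` both return 5050. Only the loop version avoids allocating one stack frame per step.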

Real-World Applications of Space Complexity

Conclusion

Time complexity is a crucial concept in data structures and algorithms that helps developers understand and optimize performance. By mastering Big O notation, analyzing algorithms, and implementing optimization techniques, you can write efficient code that scales well with increasing input sizes.

Understanding time complexity is not just useful for coding interviews but also for real-world applications where performance is a key factor.

Likewise, understanding space complexity is crucial for designing efficient and scalable applications. Whether you are optimizing recursive calls, selecting data structures, or implementing in-place algorithms, memory-efficient approaches can significantly improve performance. By mastering space complexity, developers can build software that consumes less memory and scales efficiently.

Do you have any favorite tricks for optimizing time complexity? Share your thoughts in the comments!