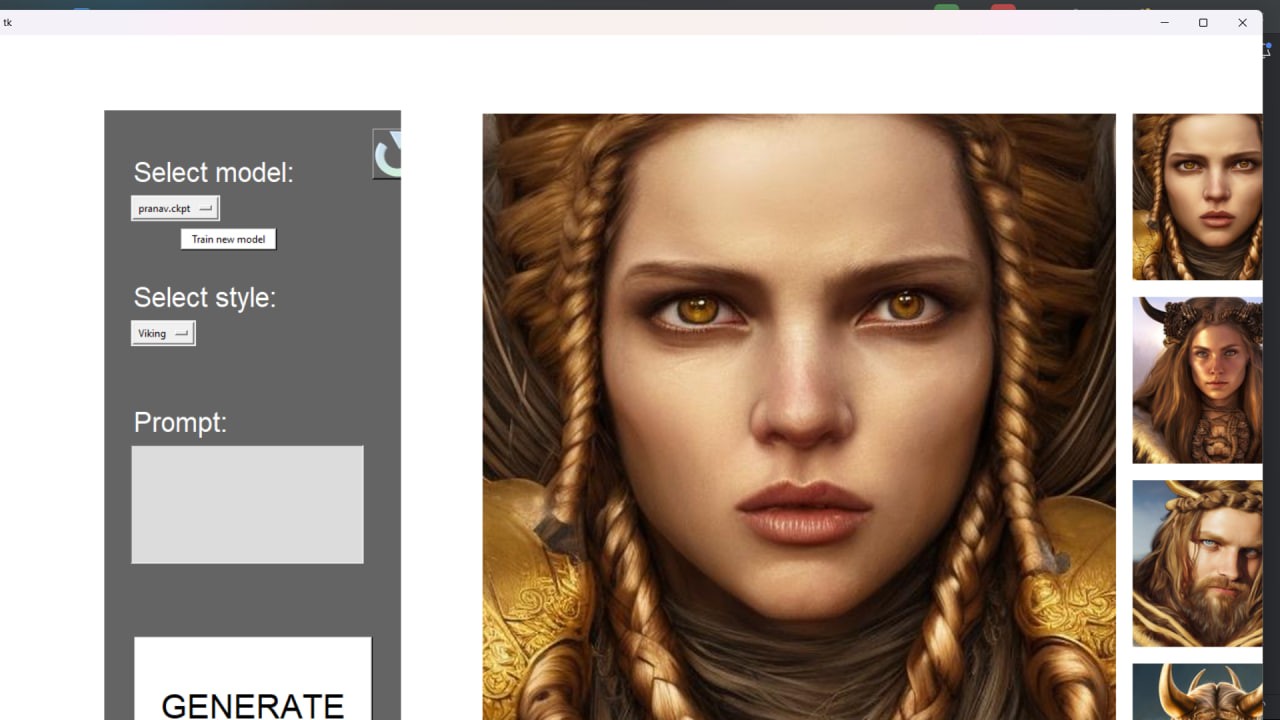

Image Generation Using Python:

Project Description:

This project involves in setting up a machine learning pipeline that utilizes AWS services for storage (S3) and queueing (SQS), along with GPU-accelerated computing resources (RTX A6000 pod) for running computer vision models. The specific model being used is related to stable diffusion and regularization techniques for image processing, likely involving techniques from deep learning and generative models. The final goal seems to be the execution of the main.py file, which presumably triggers the model training or inference process.

AWS services required:

Amazon S3 (Simple Storage Service):

Use: S3 is used to store data, including model checkpoints, training images, and any other relevant files needed for the machine learning pipeline. It provides scalable, durable, and highly available object storage, making it suitable for handling large datasets and model artifacts.

Amazon SQS (Simple Queue Service):

Use: SQS is employed as a message queue to facilitate communication between different components of the system, such as triggering model training or inference tasks. The FIFO (First-In-First-Out) queue type with content-based deduplication ensures that messages are processed in the order they are received and prevents duplicate messages from being processed, ensuring reliable and orderly execution of tasks.

AWS IAM (Identity and Access Management):

Use: IAM is utilized for managing access to AWS services securely. An IAM user is created with specific access policies granting permissions to interact with S3 and SQS resources. This ensures that only authorized users or services can access the necessary resources, enhancing security and compliance.

RunPod:

Use: RunPod is utilized as the deployment environment for the machine learning model. It offers secure cloud infrastructure, possibly with specialized hardware configurations like the RTX A6000 pod mentioned in the project setup. This infrastructure is crucial for running computationally intensive tasks efficiently, especially those involving deep learning models that benefit from GPU acceleration.

Python:

Use: Python is the primary programming language used for implementing the machine learning pipeline and executing various scripts in the project. It's used for tasks such as:

-Cloning Git repositories (git clone commands).

-Installing dependencies (pip install commands).

-Executing Python scripts (python commands).

-Configuring and updating credentials and variables files.

Recommended by LinkedIn

-Running the main machine learning pipeline (execute_pipeline.py, main.py, etc.).

Execution process:

Setup AWS

Setup RunPod

python app

Under The Guidelines of :

Details:

P Pranav Teja

ID:2100032273