Asynchronous Request-Reply Pattern:

Decouple backend processing from a frontend host, where backend processing needs to be asynchronous, but the frontend still needs a clear response. Reference: Microsoft Docs

So we will start from this statement. In this article I would like to explain an API design pattern that we can rely on for long-running processes (you may already be using it in your application). We will also see when to use this pattern and when not to.

Context and The Real Problem:

It is common for client applications running in a web client (browser) to depend on remote APIs to provide business logic and compose functionality. These APIs may be directly related to the application or may be shared services provided by a third party. Commonly, these API calls take place over HTTP(S) and follow REST semantics.

Ideally, APIs for a client application are designed to respond quickly, on the order of 100 milliseconds or less. Many factors can affect the response latency, including:

> The application's hosting stack.

> Security components.

> The relative geographic location of the caller and the backend.

> Network infrastructure.

> Current load.

> The size of the request payload.

> Processing queue length.

> The time for the backend to process the request.

Some of these factors can be mitigated by scaling out the backend. Others, such as network infrastructure, are largely outside an application developer's control. Most APIs can respond quickly enough for responses to arrive back over the same connection. Application code can make a synchronous API call in a non-blocking way, giving the appearance of asynchronous processing, which is recommended for I/O-bound operations.
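As a minimal illustration of that last point, the Python sketch below issues two simulated I/O-bound API calls without blocking the event loop. `call_api` is a hypothetical stand-in that uses `asyncio.sleep` in place of a real HTTP request: each call is still an ordinary request-reply exchange, but the caller is free to do other work while it waits.

```python
import asyncio


async def call_api(name: str, latency_s: float) -> str:
    """Hypothetical API call: asyncio.sleep stands in for waiting on the network."""
    await asyncio.sleep(latency_s)  # yields to the event loop instead of blocking
    return f"{name}: 200 OK"


async def main() -> list[str]:
    # Both calls are in flight at the same time; neither blocks the other.
    return await asyncio.gather(
        call_api("orders", 0.1),
        call_api("inventory", 0.1),
    )


if __name__ == "__main__":
    print(asyncio.run(main()))  # prints ['orders: 200 OK', 'inventory: 200 OK']
```

This only helps while the backend answers within a normal request timeout; it does not solve the long-running case described next.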


In some scenarios, however, the work done by the backend may be long-running, on the order of seconds, or might be a background process that is executed in minutes or even hours. In that case, it isn't feasible to wait for the work to complete before responding to the request. This situation is a potential problem for any synchronous request-reply pattern.

The Solution:

One solution to this problem is to use HTTP polling. Polling is useful for client-side code, because it can be hard to provide callback endpoints or to use long-running connections.
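On the client side, polling is essentially a loop. The sketch below is a minimal, transport-agnostic version in Python; `poll_until_done` and `check_status` are hypothetical names, and in a real client `check_status` would send an HTTP GET to the status endpoint and inspect the response. The fixed interval and overall timeout are assumed values that keep the client from hammering the backend or waiting forever.

```python
import time
from typing import Any, Callable, Tuple


def poll_until_done(
    check_status: Callable[[], Tuple[bool, Any]],
    interval_s: float = 1.0,
    timeout_s: float = 30.0,
) -> Any:
    """Call check_status() until it reports completion, or raise TimeoutError."""
    deadline = time.monotonic() + timeout_s
    while True:
        done, result = check_status()
        if done:
            return result
        # Give up rather than sleep past the deadline.
        if time.monotonic() + interval_s > deadline:
            raise TimeoutError("operation did not complete before the timeout")
        time.sleep(interval_s)  # wait before asking again


if __name__ == "__main__":
    attempts = 0

    def fake_status() -> Tuple[bool, Any]:
        # Hypothetical job that reports completion on the third check.
        global attempts
        attempts += 1
        return (attempts >= 3, "report ready" if attempts >= 3 else None)

    print(poll_until_done(fake_status, interval_s=0.01, timeout_s=1.0))  # prints "report ready"
```

A fixed interval is the simplest choice; a server-supplied hint or an increasing back-off are common refinements.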

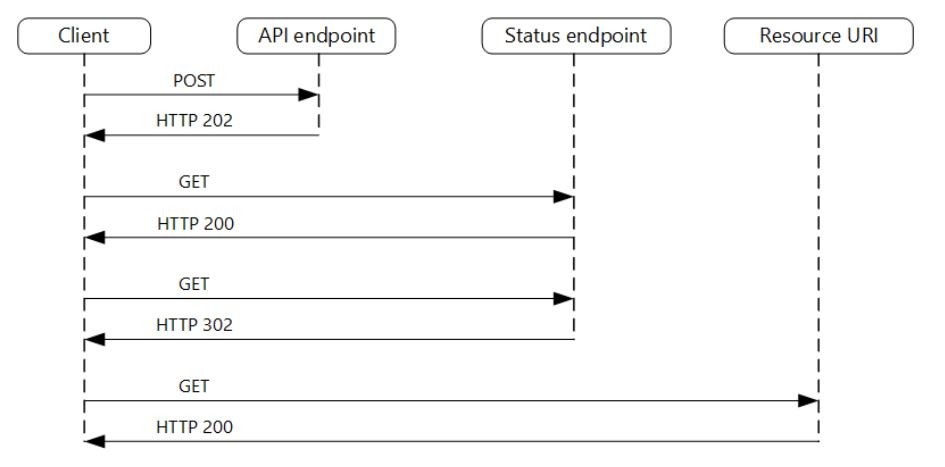

The following steps describe the flow of this pattern.

o. The client application makes a synchronous call to the API, triggering a long-running operation on the backend. The API responds synchronously as quickly as possible. It returns an HTTP 202 (Accepted) status code, acknowledging that the request has been received for processing. The API should validate both the request and the action to be performed before starting the long-running process. If the request is invalid, it should reply immediately with an error code such as HTTP 400 (Bad Request).

o. The response holds a location reference, typically in the HTTP Location header, pointing to an endpoint that the client can poll to check for the result of the long-running operation.

o. The API offloads processing to another component, such as a message queue (see the Queue-Based Load Leveling pattern). This is another pattern that we will discuss in a later article.

o. For every successful call to the status endpoint, it returns HTTP 200 (OK). While the work is still pending, the status endpoint returns a resource that indicates the work is still in progress. Once the work is complete, the status endpoint can either return a resource that indicates completion, or redirect (for example, with HTTP 302 Found) to another resource URL. For example, if the asynchronous operation creates a new resource, the status endpoint would redirect to the URL for that resource.
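The steps above can be sketched end to end in Python without a web framework. This is a minimal, in-process simulation under stated assumptions: `submit`, `get_status`, `worker`, the `/status/...` and `/reports/...` paths, and the `report` request field are all hypothetical; a `(status, headers, body)` tuple stands in for an HTTP response; and `queue.Queue` plus a dictionary stand in for a real message queue and job store. The optional `Retry-After` header is a standard HTTP header used here as a polling hint.

```python
import queue
import threading
import uuid
from typing import Dict, Optional, Tuple

# (status code, headers, JSON-like body) stands in for a real HTTP response.
Response = Tuple[int, Dict[str, str], Optional[dict]]

# Hypothetical in-memory stand-ins for a job store and a message queue.
jobs: Dict[str, dict] = {}
jobs_lock = threading.Lock()
work_queue: "queue.Queue[str]" = queue.Queue()


def submit(request: dict) -> Response:
    """Start endpoint: validate first, then enqueue and reply 202 Accepted."""
    if not isinstance(request.get("report"), str):
        # Invalid requests are rejected immediately, before any work is queued.
        return 400, {}, {"error": "'report' must be a string"}
    job_id = uuid.uuid4().hex
    with jobs_lock:
        jobs[job_id] = {"state": "pending", "result_url": None}
    work_queue.put(job_id)  # offload the long-running work to a background consumer
    return 202, {"Location": f"/status/{job_id}"}, None


def get_status(job_id: str) -> Response:
    """Status endpoint: 200 while the job is pending, 302 to the result when done."""
    with jobs_lock:
        job = jobs.get(job_id)
    if job is None:
        return 404, {}, None
    if job["state"] == "pending":
        # Retry-After is an optional hint telling the client how long to wait.
        return 200, {"Retry-After": "1"}, {"status": "InProgress"}
    return 302, {"Location": job["result_url"]}, None


def worker() -> None:
    """Background consumer: takes job ids off the queue and completes them."""
    while True:
        job_id = work_queue.get()
        # A real worker would do the long-running processing here.
        with jobs_lock:
            jobs[job_id] = {"state": "done", "result_url": f"/reports/{job_id}"}
        work_queue.task_done()


if __name__ == "__main__":
    threading.Thread(target=worker, daemon=True).start()
    print(submit({})[0])  # prints 400: rejected before any work is queued
    code, headers, _ = submit({"report": "sales"})
    job_id = headers["Location"].rsplit("/", 1)[-1]
    work_queue.join()  # demo only: wait until the worker has finished the job
    print(code, get_status(job_id)[0])  # prints "202 302"
```

Validation happens before anything is enqueued, so a bad request costs the backend nothing. In a production system the dictionary and `queue.Queue` would be replaced by durable storage and a real message broker, so that pending jobs survive a restart of the API host.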

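Use this pattern for:

> Client-side code, such as browser applications, where it's difficult to provide callback endpoints, or where long-running connections add too much complexity.

> Service calls where only the HTTP protocol is available and the return service can't fire callbacks because of firewall restrictions on the client side.

> Service calls that need to be integrated with legacy architectures that don't support modern callback technologies such as WebSockets or webhooks.

Do not use this pattern when:

> You can use a service built for asynchronous notifications instead, such as Azure Event Grid.

> Responses must stream in real time to the client.

> The client needs to collect many results, and the latency with which those results are received is important. Consider a service bus pattern instead.

> You can use server-side persistent network connections such as WebSockets or SignalR to notify the caller of the result.

> The network design allows you to open up ports to receive asynchronous callbacks or webhooks.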

Please refer to Azure Logic Apps as a reference implementation of this pattern.
